Download SnowPro Advanced-Architect Certification.ARA-C01.ExamTopics.2026-03-20.157q.tqb

| Field | Value |
| --- | --- |
| Vendor | Snowflake |
| Exam Code | ARA-C01 |
| Exam Name | SnowPro Advanced-Architect Certification |
| Date | Mar 20, 2026 |
| File Size | 1 MB |

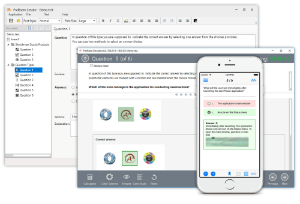

How to open TQB files?

Files with TQB (Taurus Question Bank) extension can be opened by Taurus Exam Studio.


Demo Questions

Question 1

An Architect wants to stream website logs near real-time to Snowflake and has decided to use Snowflake Connector for Kafka.

What characteristics should the Architect consider regarding the different ingestion methods? (Choose two.)

- Snowpipe Streaming is the default ingestion method.

- Snowpipe Streaming supports schema detection.

- Snowpipe has a lower latency than Snowpipe Streaming.

- Snowpipe Streaming automatically flushes data every one second.

- Snowpipe can handle jumps or resetting offsets by default.

Correct answer: B, D

Question 2

An Architect executes the following statements in order:

create table emp(id integer);

insert into emp values (1),(2);

create temporary table emp(id integer);

insert into emp values (1);

The Architect then executes the following statements:

select count(*) from emp;

drop table emp;

select count(*) from emp;

What will be the result?

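- The first query returns COUNT(*) = 2 and the second query returns COUNT(*) = 1.

- The first query returns COUNT(*) = 1 and the second query returns COUNT(*) = 2.

- Both queries return COUNT(*) = 2.

- The final query will result in an error.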

Correct answer: B
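Snowflake documents that a temporary table may share a name with a permanent table in the same schema, and that the temporary table then takes precedence for the rest of the session. The same statements are repeated below, annotated with the table each unqualified `emp` reference would resolve to under that rule. The comments are a reading of the documented behavior, not captured output.

```sql
-- Permanent table with two rows.
create table emp(id integer);
insert into emp values (1),(2);

-- Temporary table with the same name; it hides the permanent table for this session.
create temporary table emp(id integer);
insert into emp values (1);     -- lands in the temporary table

select count(*) from emp;       -- resolves to the temporary table: 1 row
drop table emp;                 -- drops the temporary table only
select count(*) from emp;       -- the permanent table is visible again: 2 rows
```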

Question 3

An Architect needs to ensure that users are able to upload data from Snowsight into an existing table.

What privileges need to be granted to the users to accomplish this? (Choose three.)

- Database: USAGE

- Database: OWNERSHIP

- Schema: CREATE TABLE

- Schema: USAGE

- Table: SELECT

- Table: OWNERSHIP

Correct answer: A, D, E

Question 4

An Architect has implemented the search optimization service for a table. A user adds a new column to the table and there is a decrease in query performance.

The Architect then executes the DESCRIBE SEARCH OPTIMIZATION command and finds that the newly-added column was not included in the search access path.

Why did this occur?

- The new column has a data type that is not supported by the search optimization service.

- The new column is automatically included in the search access path, but there is a time delay before it takes effect.

- The ON clause was used when enabling the search optimization service, which does not automatically include new columns.

- The SEARCH OPTIMIZATION property needs to be dropped and then added back for the changes to take effect.

Correct answer: C
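A minimal sketch of the situation described in this question, assuming a hypothetical table `sales` with columns `customer_id` and `region` (none of these names come from the question). It shows that an ON clause limits search optimization to the listed columns, so a column added later has to be registered explicitly.

```sql
-- Hypothetical table and column names, for illustration only.
-- Enabling search optimization with an ON clause covers only the listed columns.
alter table sales add search optimization on equality(customer_id);

-- A column added afterwards is not part of any search access path.
alter table sales add column region varchar;

-- Lists the configured search access paths; region would not appear here.
describe search optimization on sales;

-- The new column has to be added explicitly.
alter table sales add search optimization on equality(region);
```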

Question 5

Three tables (A, B and C) are loaded with an INSERT statement. Each INSERT statement is executed by a separate Snowflake task: TASK_A, TASK_B, or TASK_C. All tasks are scheduled for a daily execution at the same time. TASK_C has the longest average execution duration.

How can an Architect create an aggregated data object based on a join of tables A, B and C?

- Create a materialized view based on a join of tables A, B and C.

- Create a task, TASK_D, to join tables A, B and C and INSERT the data into a target table. Set the predecessor of TASK_D to TASK_C.

- Create a task, TASK_D, to join tables A, B and C and INSERT the data into a target table. Set the predecessor of TASK_D to TASK_A, TASK_B, and TASK_C.

- Create a materialized view based on a join of tables A, B and C. Create TASK_D to execute a refresh of the materialized view once each hour.

Correct answer: C
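The multi-predecessor option could be sketched roughly as follows. The warehouse `etl_wh`, the target table `abc_summary`, and the join column `id` are hypothetical, and the sketch assumes TASK_A, TASK_B, and TASK_C can all act as predecessors within one task graph. A child task listed with several predecessors in its AFTER clause starts only once all of them have completed successfully, so the longest-running task no longer needs to be singled out.

```sql
-- Hypothetical warehouse, target table, and join column.
create task task_d
  warehouse = etl_wh
  after task_a, task_b, task_c     -- waits for all three predecessors
as
  insert into abc_summary
  select a.id, count(*) as row_count
  from a
  join b on b.id = a.id
  join c on c.id = a.id
  group by a.id;

-- Tasks are created in a suspended state and must be resumed before they run.
alter task task_d resume;
```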

Question 6

An Architect is designing a single account strategy so that all the environments (production, test, and development) reside in the same account for simplified management.

What are the advantages of this strategy over a multi-account strategy? (Choose two.)

- It is easy to reference production data during the development cycle.

- It is easy to create secure shares and share them internally within the same account.

- Objects may be promoted between environments through the use of zero-copy cloning.

- All objects reside within one account with one set of Role-Based Access Control (RBAC) privileges and one location for all data.

- Managing production and non-production environments in the same account eliminates the need to make modifications to the development cycle when promoting code through the environments.

Correct answer: C, D

Question 7

A company has a single Snowflake account for both its production and non-production environments. An Architect needs to design a solution defining the different database layers with a process that also includes a periodic data refresh from production to non-production with data governance in place to protect columns that contain sensitive data.

How should the layers be configured, and what process should be followed?

- Database layers: RAW -> Presentation -> Integration. Schedule tasks that share the identified tables using data sharing from production to non-production. Define Dynamic Data Masking on columns with sensitive data in the production environment, based on the defined roles.

- Database layers: RAW -> Presentation -> Integration. Schedule tasks that clone the identified tables from production to non-production. Define Dynamic Data Masking on the columns that have sensitive data in the production environment, based on the defined roles.

- Database layers: RAW -> Integration -> Presentation. Schedule tasks that clone the identified tables from production to non-production. Define Dynamic Data Masking on columns that have sensitive data in the production environment, based on the defined roles.

- Database layers: RAW -> Integration -> Presentation. Schedule tasks that clone the identified tables from production to non-production. Define row access policies on the columns that have sensitive data in the production environment, based on the defined roles.

Correct answer: C
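A rough sketch of how role-based masking and a scheduled clone might fit together. Every database, schema, table, column, role, and warehouse name below is hypothetical, and the weekly schedule is an arbitrary choice for illustration.

```sql
-- Hypothetical object and role names throughout.
-- Mask the sensitive column for every role except the privileged one.
create masking policy prod_db.governance.email_mask as (val string) returns string ->
  case
    when current_role() in ('PII_READER') then val
    else '*** masked ***'
  end;

alter table prod_db.presentation.customers
  modify column email set masking policy prod_db.governance.email_mask;

-- Periodic refresh: replace the non-production copy with a zero-copy clone.
create task prod_db.governance.refresh_nonprod_customers
  warehouse = admin_wh
  schedule = 'USING CRON 0 2 * * SUN UTC'   -- Sundays at 02:00 UTC
as
  create or replace table nonprod_db.presentation.customers
    clone prod_db.presentation.customers;
```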

Question 8

A company has a very large table that is being queried by two groups. Each group uses unique WHERE clauses in their queries. The first group consistently achieves high-performance results, while the second group experiences poor query performance.

Analysis of the Query Profile reveals that the first group’s queries access a limited number of micro-partitions, in contrast to the second group’s queries that scan all micro-partitions in the table.

What should be done to ensure that performance is MAXIMIZED for both sets of queries?

- Create a materialized view of the table and assign a cluster key to the view that supports the WHERE clause of the poorly-performing queries.

- Clone the table and assign a cluster key to the cloned table that supports the WHERE clause of the poorly-performing queries.

- Modify the table using the CLUSTER BY parameter, including all columns that are referenced in both groups’ WHERE clauses.

- Have the second group increase the size of the virtual warehouse when running queries.

Correct answer: A
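The materialized-view option could be sketched as follows. Table and column names are hypothetical, and the base table is assumed to be organized around the first group's filter column. `CREATE MATERIALIZED VIEW` accepts its own `CLUSTER BY` clause, so the view can be clustered differently from the base table while Snowflake maintains it automatically.

```sql
-- Hypothetical table and column names.
-- The base table stays organized for the first group's filters (assumed: order_date).
-- The materialized view holds the same rows clustered for the second group's filters.
create materialized view orders_by_customer
  cluster by (customer_id)
as
  select order_id, order_date, customer_id, amount
  from orders;

-- The second group queries the view, which allows pruning on customer_id.
select sum(amount) from orders_by_customer where customer_id = 42;
```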

Question 9

An Architect has designed a data pipeline that is receiving small CSV files from multiple sources. All of the files are landing in one location. Specific files are filtered for loading into Snowflake tables using the COPY command. The loading performance is poor.

What changes can be made to improve the data loading performance?

- Increase the size of the virtual warehouse.

- Create a multi-cluster warehouse and merge smaller files to create bigger files.

- Create a specific storage landing bucket to avoid file scanning.

- Change the file format from CSV to JSON.

Correct answer: B
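Snowflake's loading documentation advises against applying patterns that filter a large number of staged files and recommends organizing staged data by path. The sketch below contrasts the two approaches; the stage, path, and table names are hypothetical.

```sql
-- Hypothetical stage, path, and table names.
-- Shared landing location: the pattern is evaluated against every staged file.
copy into orders
  from @landing_stage
  pattern = '.*orders_.*[.]csv'
  file_format = (type = csv skip_header = 1);

-- Dedicated prefix per source: only files under orders/ are considered.
copy into orders
  from @landing_stage/orders/
  file_format = (type = csv skip_header = 1);
```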

Question 10

An Architect needs to improve the performance of reports that pull data from multiple Snowflake tables, join, and then aggregate the data. Users access the reports using several dashboards. There are performance issues on Monday mornings between 9:00am-11:00am when many users check the sales reports.

The size of the group has increased from 4 to 8 users. Waiting times to refresh the dashboards have increased significantly. Currently this workload is being served by a virtual warehouse with the following parameters:

AUTO_RESUME = TRUE

AUTO_SUSPEND = 60

SIZE = Medium

What is the MOST cost-effective way to increase the availability of the reports?

- Use materialized views and pre-calculate the data.

- Increase the warehouse to size Large and set AUTO_SUSPEND = 600.

- Use a multi-cluster warehouse in maximized mode with 2 size Medium clusters.

- Use a multi-cluster warehouse in auto-scale mode with 1 size Medium cluster, and set MIN_CLUSTER_COUNT = 1 and MAX_CLUSTER_COUNT = 4.

Correct answer: D
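An auto-scale configuration along these lines could look like the sketch below. The warehouse name `reporting_wh` is hypothetical; the size and suspend settings are taken from the question. With a minimum below the maximum, the warehouse runs in auto-scale mode: additional Medium clusters start when queries queue and shut down again when the load drops, so extra credits are consumed only during the Monday peak.

```sql
-- Hypothetical warehouse name; size and suspend values come from the question.
alter warehouse reporting_wh set
  warehouse_size = 'MEDIUM'
  min_cluster_count = 1
  max_cluster_count = 4
  scaling_policy = 'STANDARD'
  auto_suspend = 60
  auto_resume = true;
```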

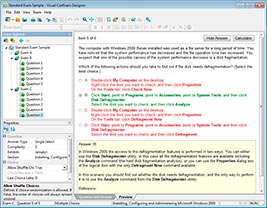

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

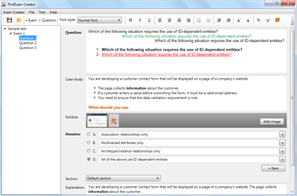

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!