Download AWS Certified Machine Learning - Specialty.MLS-C01.ExamTopics.2026-04-26.369q.tqb

| Field | Value |
| --- | --- |
| Vendor | Amazon |
| Exam Code | MLS-C01 |
| Exam Name | AWS Certified Machine Learning - Specialty |
| Date | Apr 26, 2026 |
| File Size | 4 MB |

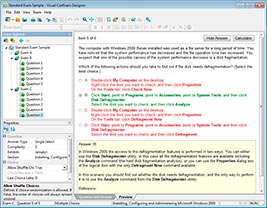

How to open TQB files?

Files with the TQB (Taurus Question Bank) extension can be opened with Taurus Exam Studio.

Demo Questions

Question 1

A machine learning (ML) engineer is using Amazon SageMaker automatic model tuning (AMT) to optimize a model's hyperparameters. The ML engineer notices that the tuning jobs take a long time to run. The tuning jobs continue even when the jobs are not significantly improving against the objective metric.

The ML engineer needs the training jobs to optimize the hyperparameters more quickly.

How should the ML engineer configure the SageMaker AMT data types to meet these requirements?

- Set Strategy to the Bayesian value.

- Set RetryStrategy to a value of 1.

- Set ParameterRanges to the narrow range inferred from previous hyperparameter jobs.

- Set TrainingJobEarlyStoppingType to the AUTO value.

Correct answer: D
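
As a rough illustration of option D, the sketch below builds the configuration dictionary that the SageMaker `CreateHyperParameterTuningJob` API accepts as `HyperParameterTuningJobConfig`. The metric name, resource limits, and the `eta` range are assumptions chosen for the example, not values from the question. Note that the API spells the enum value `Auto`.

```python
# Minimal sketch of a HyperParameterTuningJobConfig dictionary.
# Metric name, limits, and parameter range are illustrative assumptions.
tuning_job_config = {
    "Strategy": "Bayesian",
    "HyperParameterTuningJobObjective": {
        "Type": "Maximize",
        "MetricName": "validation:auc",  # assumed objective metric
    },
    "ResourceLimits": {
        "MaxNumberOfTrainingJobs": 20,
        "MaxParallelTrainingJobs": 2,
    },
    "ParameterRanges": {
        "ContinuousParameterRanges": [
            {"Name": "eta", "MinValue": "0.01", "MaxValue": "0.3"},
        ],
    },
    # Lets SageMaker stop training jobs that are unlikely to improve
    # on the best objective value found so far.
    "TrainingJobEarlyStoppingType": "Auto",
}
```

With boto3, a dictionary like this would be passed as the `HyperParameterTuningJobConfig` argument of `create_hyper_parameter_tuning_job`, alongside a training job definition.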

Question 2

A banking company provides financial products to customers around the world. A machine learning (ML) specialist collected transaction data from internal customers. The ML specialist split the dataset into training, testing, and validation datasets. The ML specialist analyzed the training dataset by using Amazon SageMaker Clarify. The analysis found that the training dataset contained fewer examples of customers in the 40 to 55 year-old age group compared to the other age groups.

Which type of pretraining bias did the ML specialist observe in the training dataset?

- Difference in proportions of labels (DPL)

- Class imbalance (CI)

- Conditional demographic disparity (CDD)

- Kolmogorov-Smirnov (KS)

Correct answer: B
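
For reference, SageMaker Clarify defines class imbalance for two facets as CI = (n_a - n_d) / (n_a + n_d), where n_a and n_d are the member counts of the two facets. The sketch below computes it; the function name and the counts are hypothetical and only illustrate the formula.

```python
def class_imbalance(n_a: int, n_d: int) -> float:
    """Class imbalance CI = (n_a - n_d) / (n_a + n_d), in the range [-1, 1].

    n_a: number of examples in facet a (for example, all other age groups)
    n_d: number of examples in facet d (for example, the 40 to 55 age group)
    """
    total = n_a + n_d
    if total == 0:
        raise ValueError("at least one example is required")
    return (n_a - n_d) / total


# Hypothetical counts: facet d is heavily underrepresented.
ci = class_imbalance(n_a=900, n_d=100)
```

Values near 0 indicate balanced facets. Values near +1 or -1 indicate that one facet dominates the dataset.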

Question 3

A bank has collected customer data for 10 years in CSV format. The bank stores the data in an on-premises server. A data science team wants to use Amazon SageMaker to build and train a machine learning (ML) model to predict churn probability. The team will use the historical data. The data scientists want to perform data transformations quickly and to generate data insights before the team builds a model for production.

Which solution will meet these requirements with the LEAST development effort?

- Upload the data into the SageMaker Data Wrangler console directly. Perform data transformations and generate insights within Data Wrangler.

- Upload the data into an Amazon S3 bucket. Allow SageMaker to access the data that is in the bucket. Import the data from the S3 bucket into SageMaker Data Wrangler. Perform data transformations and generate insights within Data Wrangler.

- Upload the data into the SageMaker Data Wrangler console directly. Allow SageMaker and Amazon QuickSight to access the data that is in an Amazon S3 bucket. Perform data transformations in Data Wrangler and save the transformed data into a second S3 bucket. Use QuickSight to generate data insights.

- Upload the data into an Amazon S3 bucket. Allow SageMaker to access the data that is in the bucket. Import the data from the bucket into SageMaker Data Wrangler. Perform data transformations in Data Wrangler. Save the data into a second S3 bucket. Use a SageMaker Studio notebook to generate data insights.

Correct answer: B

Question 4

A company maintains a 2 TB dataset that contains information about customer behaviors. The company stores the dataset in Amazon S3. The company stores a trained model container in Amazon Elastic Container Registry (Amazon ECR).

A machine learning (ML) specialist needs to score a batch model for the dataset to predict customer behavior. The ML specialist must select a scalable approach to score the model.

Which solution will meet these requirements MOST cost-effectively?

- Score the model by using AWS Batch managed Amazon EC2 Reserved Instances. Create an Amazon EC2 instance store volume and mount it to the Reserved Instances.

- Score the model by using AWS Batch managed Amazon EC2 Spot Instances. Create an Amazon FSx for Lustre volume and mount it to the Spot Instances.

- Score the model by using an Amazon SageMaker notebook on Amazon EC2 Reserved Instances. Create an Amazon EBS volume and mount it to the Reserved Instances.

- Score the model by using Amazon SageMaker notebook on Amazon EC2 Spot Instances. Create an Amazon Elastic File System (Amazon EFS) file system and mount it to the Spot Instances.

Correct answer: B

Question 5

A data scientist is implementing a deep learning neural network model for an object detection task on images. The data scientist wants to experiment with a large number of parallel hyperparameter tuning jobs to find hyperparameters that optimize compute time.

The data scientist must ensure that jobs that underperform are stopped. The data scientist must allocate computational resources to well-performing hyperparameter configurations. The data scientist is using the hyperparameter tuning job to tune the stochastic gradient descent (SGD) learning rate, momentum, epoch, and mini-batch size.

Which technique will meet these requirements with LEAST computational time?

- Grid search

- Random search

- Bayesian optimization

- Hyperband

Correct answer: D
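
Hyperband builds on successive halving: many configurations start with a small budget, the weakest are stopped, and the survivors receive more budget. The sketch below shows only that successive-halving core, not the full Hyperband bracket schedule. The `evaluate` function is a toy stand-in for a training run, and all names and values are assumptions.

```python
from typing import Callable, Dict, List

Config = Dict[str, float]


def successive_halving(
    configs: List[Config],
    evaluate: Callable[[Config, int], float],
    min_budget: int = 1,
    eta: int = 3,
) -> Config:
    """Keep the top 1/eta configurations each round and multiply the budget by eta."""
    survivors = list(configs)
    budget = min_budget
    while len(survivors) > 1:
        # Score every surviving configuration at the current budget (higher is better).
        scored = sorted(
            ((evaluate(c, budget), c) for c in survivors),
            key=lambda pair: pair[0],
            reverse=True,
        )
        keep = max(1, len(survivors) // eta)
        survivors = [c for _, c in scored[:keep]]  # underperformers are stopped
        budget *= eta  # survivors get more resources
    return survivors[0]


def toy_evaluate(config: Config, budget: int) -> float:
    # Toy objective: best at lr = 0.1; the penalty shrinks as budget grows.
    return -abs(config["lr"] - 0.1) * (1 + 1 / budget)


candidates = [{"lr": lr} for lr in (0.001, 0.01, 0.02, 0.05, 0.1, 0.2, 0.3, 0.5, 1.0)]
best = successive_halving(candidates, toy_evaluate)
```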

Question 6

A company that operates oil platforms uses drones to photograph locations on oil platforms that are difficult for humans to access to search for corrosion.

Experienced engineers review the photos to determine the severity of corrosion. There can be several corroded areas in a single photo. The engineers determine whether the identified corrosion needs to be fixed immediately, scheduled for future maintenance, or requires no action. The corrosion appears in an average of 0.1% of all photos.

A data science team needs to create a solution that automates the process of reviewing the photos and classifying the need for maintenance.

Which combination of steps will meet these requirements? (Choose three.)

- Use an object detection algorithm to train a model to identify corrosion areas of a photo.

- Use Amazon Rekognition with label detection on the photos.

- Use a k-means clustering algorithm to train a model to classify the severity of corrosion in a photo.

- Use an XGBoost algorithm to train a model to classify the severity of corrosion in a photo.

- Perform image augmentation on photos that contain corrosion.

- Perform image augmentation on photos that do not contain corrosion.

Correct answer: A, D, E

Question 7

A company wants to detect credit card fraud. The company has observed that an average of 2% of credit card transactions are fraudulent. A data scientist trains a classifier on a year's worth of credit card transaction data. The classifier needs to identify the fraudulent transactions. The company wants to accurately capture as many fraudulent transactions as possible.

Which metrics should the data scientist use to optimize the classifier? (Choose two.)

- Specificity

- False positive rate

- Accuracy

- F1 score

- True positive rate

Correct answer: D, E
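
To see why accuracy is misleading at a 2% fraud rate, the sketch below computes true positive rate (recall), precision, and F1 from confusion-matrix counts. The counts are made up for illustration.

```python
from typing import Tuple


def recall_precision_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Return (true positive rate, precision, F1) from confusion-matrix counts."""
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return recall, precision, f1


# Hypothetical results on 10,000 transactions, 200 of them fraudulent.
tp, fn, fp, tn = 160, 40, 80, 9720
recall, precision, f1 = recall_precision_f1(tp, fp, fn)

# A classifier that flags nothing as fraud still reaches 98% accuracy
# while catching zero fraudulent transactions.
trivial_accuracy = (0 + 9800) / 10000
trivial_recall, _, trivial_f1 = recall_precision_f1(tp=0, fp=0, fn=200)
```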

Question 8

A growing company has a business-critical key performance indicator (KPI) for the uptime of a machine learning (ML) recommendation system. The company is using Amazon SageMaker hosting services to develop a recommendation model in a single Availability Zone within an AWS Region.

A machine learning (ML) specialist must develop a solution to achieve high availability. The solution must have a recovery time objective (RTO) of 5 minutes.

Which solution will meet these requirements with the LEAST effort?

- Deploy multiple instances for each endpoint in a VPC that spans at least two Regions.

- Use the SageMaker auto scaling feature for the hosted recommendation models.

- Deploy multiple instances for each production endpoint in a VPC that spans at least two subnets that are in a second Availability Zone.

- Frequently generate backups of the production recommendation model. Deploy the backups in a second Region.

Correct answer: C

Question 9

A news company is developing an article search tool for its editors. The search tool should look for the articles that are most relevant and representative for particular words that are queried among a corpus of historical news documents.

The editors test the first version of the tool and report that the tool seems to look for word matches in general. The editors have to spend additional time to filter the results to look for the articles where the queried words are most important. A group of data scientists must redesign the tool so that it isolates the most frequently used words in a document. The tool also must capture the relevance and importance of words for each document in the corpus.

Which solution meets these requirements?

- Extract the topics from each article by using Latent Dirichlet Allocation (LDA) topic modeling. Create a topic table by assigning the sum of the topic counts as a score for each word in the articles. Configure the tool to retrieve the articles where this topic count score is higher for the queried words.

- Build a term frequency for each word in the articles that is weighted with the article's length. Build an inverse document frequency for each word that is weighted with all articles in the corpus. Define a final highlight score as the product of both of these frequencies. Configure the tool to retrieve the articles where this highlight score is higher for the queried words.

- Download a pretrained word-embedding lookup table. Create a titles-embedding table by averaging the title's word embedding for each article in the corpus. Define a highlight score for each word as inversely proportional to the distance between its embedding and the title embedding. Configure the tool to retrieve the articles where this highlight score is higher for the queried words.

- Build a term frequency score table for each word in each article of the corpus. Assign a score of zero to all stop words. For any other words, assign a score as the word’s frequency in the article. Configure the tool to retrieve the articles where this frequency score is higher for the queried words.

Correct answer: B
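
Option B describes TF-IDF. The sketch below is a minimal pure-Python version under simple assumptions: whitespace tokenization, term frequency normalized by document length, and an unsmoothed logarithmic inverse document frequency. The function name and the sample corpus are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List


def tf_idf(corpus: List[str]) -> List[Dict[str, float]]:
    """Return one {word: score} mapping per document, where score = tf * idf."""
    docs = [text.lower().split() for text in corpus]
    n_docs = len(docs)
    # Document frequency: in how many documents each word appears.
    doc_freq = Counter(word for doc in docs for word in set(doc))
    scores = []
    for doc in docs:
        counts = Counter(doc)
        scores.append(
            {
                word: (count / len(doc)) * math.log(n_docs / doc_freq[word])
                for word, count in counts.items()
            }
        )
    return scores


corpus = [
    "the bank raised rates",
    "the election results",
    "the bank the bank",
]
scores = tf_idf(corpus)
```

A word that appears in every document, such as "the" here, scores zero, while a word concentrated in one article scores highest for that article.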

Question 10

A global company receives and processes hundreds of documents daily. The documents are in printed .pdf format or .jpg format.

A machine learning (ML) specialist wants to build an automated document processing workflow to extract text from specific fields from the documents and to classify the documents. The ML specialist wants a solution that requires low maintenance.

Which solution will meet these requirements with the LEAST operational effort?

- Use a PaddleOCR model in Amazon SageMaker to detect and extract the required text and fields. Use a SageMaker text classification model to classify the document.

- Use a PaddleOCR model in Amazon SageMaker to detect and extract the required text and fields. Use Amazon Comprehend to classify the document.

- Use Amazon Textract to detect and extract the required text and fields. Use Amazon Rekognition to classify the document.

- Use Amazon Textract to detect and extract the required text and fields. Use Amazon Comprehend to classify the document.

Correct answer: D

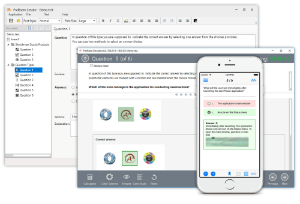

HOW TO OPEN VCE FILES

Use VCE Exam Simulator to open VCE files

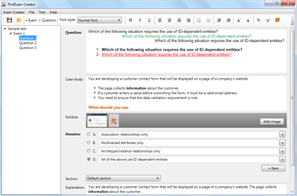

HOW TO OPEN VCEX FILES

Use ProfExam Simulator to open VCEX files

ProfExam at a 20% markdown

You have the opportunity to purchase ProfExam at a 20% reduced price

Get Now!